Kyivstar went dark on December 12, 2023. The attackers had been inside Ukraine's largest telecommunications carrier (the network that served more than half the country) for at least six months before they pressed the button. When they did, they did not steal information. They destroyed infrastructure. The destruction was timed to coincide with a moment of maximum strategic value to Russia and maximum harm to Ukraine. The attack was not the reconnaissance. The reconnaissance had happened months earlier, quietly, while Kyivstar's defenders saw nothing unusual because there was nothing unusual to see.

Two months later, on February 7, 2024, the three principal American cybersecurity agencies (CISA, the NSA, and the FBI) issued a joint advisory describing a campaign called Volt Typhoon. A Chinese state-sponsored group had been found inside American water utilities, power grids, transportation systems, and telecommunications carriers. Some of the compromises were years old. The advisory stated, in language more direct than such advisories usually allow, that the purpose of the campaign did not appear to be espionage. It appeared to be pre-positioning, preparing to disrupt those networks at a moment of future crisis. The crisis the advisory gestured toward, carefully, was a Chinese move on Taiwan.

Kyivstar is the clearest public demonstration of what pre-positioning doctrine produces when it activates: invisible presence for months, then destruction timed to strategic need. Volt Typhoon is the American counterpart at the pre-activation stage: the implants confirmed, the purpose assessed, the timeline not yet determined. The cases are not identical, and the essay is not claiming they are. The claim is narrower, and it is sufficient: the doctrine that produced Kyivstar is the doctrine that produces Volt Typhoon, the People's Liberation Army has studied it openly, and the readiness window that defines the near strategic horizon is the one around a Taiwan contingency.

This essay argues that the defender's response to that timeline requires an artificial intelligence that is, by the operative definitions of the current AI safety discourse, dangerous. It argues that we need that AI soon. And it argues that the governance regime for that AI (the thing that distinguishes a defensive capability from a weapon turned on the infrastructure it was built to defend) is the part we have not built, and the part the remaining time must be spent building in parallel with the capability itself.

The argument is a tradeoff. Time, control, and outcomes: we cannot maximize all three. Time is not a variable we control. Control and outcomes are what we purchase with whatever time we have. The current framework has spent years optimizing for the wrong kind of control, refusing capability rather than governing it, while the adversary builds the capability we have refused ourselves. The allocation has to change, now, and the governance model that makes the change survivable already exists in adjacent engineering disciplines that the AI safety community has not yet borrowed from.

What we have found is not what is there.

Volt Typhoon is not the whole iceberg. Over 2024 and into 2025, a parallel campaign called Salt Typhoon exposed that PRC-linked actors had been inside at least nine major American telecommunications carriers, including the networks carrying lawful intercept data for federal investigators. Whether Salt Typhoon and Volt Typhoon are the work of the same operational unit has not been publicly confirmed; that they emerge from the same national apparatus, and reflect a consistent doctrinal posture, is not in dispute. The Kyivstar compromise was disclosed only after the destruction, with a dwell time of six months before anyone noticed. The SolarWinds campaign sat undetected in thousands of networks for more than a year. These are the compromises that were caught. Catching them required, in nearly every case, a specific adversary error or a specific defender's lucky look in a specific direction.

The honest prior is that the undetected inventory is substantially larger than the known one. We are cataloguing the adversary's mistakes. We are not cataloguing their footprint.

The targeting is not opportunistic. The People's Liberation Army has published, in open-source doctrinal writing, a concept called systems destruction warfare. The idea: the United States cannot be beaten in a direct match against its force projection, so it must be beaten by degrading the civilian systems that force projection depends on, before the projection begins. Power grids. Telecom backhaul. Water utilities. Ports and rail. The unglamorous infrastructure that keeps everything else moving. Volt Typhoon is that doctrine executed on schedule.

The defender is fragmented by design. The common shorthand is that "85 percent of American critical infrastructure is privately owned," a number that is frequently cited, imperfectly sourced, and best stated as a range: between half and the large majority, depending heavily on sector. What is not in dispute is the structural consequence. Critical infrastructure is distributed across sixteen designated sectors, each with its own regulator, its own maturity curve, its own budget reality. The PLA targets one adversary. The United States defends as sixteen.

It gets worse at the ground level. Wendy Nather, writing in 2011, coined the term security poverty line: the threshold below which an organization cannot afford, in money or talent or institutional attention, the security posture its threat model actually requires. The rural electric cooperative that keeps the lights on for three counties sits below that line. The regional water utility serving forty thousand households sits below it. The tier-three telecommunications carrier that happens to be the only backhaul to an American Pacific installation sits below it. These organizations do not have a chief information security officer. They do not have a security operations center. Very often they do not have dedicated security staff at all. They have a systems administrator who is also the database administrator who is also the network engineer, and a firewall from 2017. They are the pre-positioning targets, not despite their weakness, but because of it, and because they are what everything else runs on. The most capable military in the world rests, at ground level, on the networks these organizations keep running.

A newer problem is now compounding the old one. The defender's response to sophisticated threats has been, increasingly, to reach for artificial intelligence: AI-assisted detection, AI-powered log analysis, AI copilots for security operations. That response is now under direct, sustained, and accelerating assault. Between mid-2025 and the first months of 2026, every layer of the AI supply chain has seen documented compromise, much of it nation-state adjacent. The full incident shelf runs long enough to be distracting; the shape of it matters more than any individual entry. Model-distribution platforms have been poisoned. Package registries have been hit by self-propagating worms. Inference-layer proxies have been compromised. Agent-framework runtimes sit on architectural vulnerabilities that hundreds of thousands of servers inherit by default. The axios npm package, a transitive dependency across roughly fifty million weekly downloads of Node.js tooling, including most AI frameworks, was compromised in March 2026 by an actor attributed to North Korea. The adversary is not abstractly interested in the tooling we are building the hunter from. They are already inside it.

The most telling of the 2025 incidents is the one that closes the loop. In August, a supply chain attack known as s1ngularity compromised the widely used nx build system. It was the first documented case of a supply chain attack that actively hunted for installed AI assistants on the developer's machine and then weaponized those assistants (the command-line versions of Claude, Gemini, and Amazon Q) as reconnaissance agents against the developer's own environment. The attackers ran them with permissive flags specifically named, in the tools' own documentation, to disable safety checks. The adversary is using our AI tools against us while the AI safety community debates whether we should have AI tools that could be used against them.

The clock is not hypothetical, even if the exact date is contested. Admiral Phil Davidson, then the head of American Pacific forces, testified to the Senate Armed Services Committee in March 2021 that China could attempt to act against Taiwan within the next six years (the so-called Davidson Window, which lands near 2027). His successors have tightened rather than loosened the assessment. This is not a PLA operational timetable that the United States has obtained. It is a strategic-readiness window defined by American defense officials watching the trajectory of Chinese military modernization. The distinction matters. The window is not when the adversary has committed to act. It is the earliest point at which American planners assess the adversary could act if they chose to. The window defines the period in which defensive investment matters most. It is also the period in which pre-positioned access becomes strategically live rather than merely pre-positioned. Every quarter the AI policy conversation spends refining the taxonomy of permissible refusal is a quarter further into that window.

A fair reader will object here that the capability gap this essay is concerned with has many causes, and that AI safety posture is only one of them. That objection is correct and worth stating plainly. Critical infrastructure defenders lack telemetry. Operational technology environments are fragile and under-instrumented. Asset inventories are incomplete. Small utilities cannot staff what they cannot hire. Legal authorities are fragmented across federal and state lines. Vendor remote access is poorly governed. Response authority during a multi-sector incident is unclear in ways that a crisis will clarify painfully. These are real bottlenecks. None of them are solved by the hunter this essay is arguing for. The argument is not that a governed hunter is sufficient. The argument is that it is necessary: that the other bottlenecks can be addressed, are being addressed, and will continue to be addressed, and that none of those efforts closes the specific gap that comes from a defender whose best reasoning tool is structurally prevented from reasoning about the thing the adversary is actually doing. The more careful way to state the AI-policy part of the problem is this: the current frontier AI landscape has not produced a clear authorization path for a high-capability defensive cyber model of the kind this essay describes. Refusal policy, pre-deployment gating, and post-training safety work all discourage the production of such a model by the labs capable of building it, and no alternative authorization path has been built. The hunter is one intervention in a larger defensive program. It is the intervention the current posture does not permit to exist.

Safety is a control problem, not a failure problem.

The reason the current approach cannot produce the defender we need is a paradigm mismatch, and it is worth being careful about what that means. The critique of the current AI safety posture that follows is specific. It is not "AI safety is wrong." AI safety, broadly construed, is a serious discipline with serious practitioners. NIST's AI Risk Management Framework is structured around govern, map, measure, and manage functions. Frontier laboratories have published preparedness frameworks with capability evaluations and deployment controls. Responsible scaling policies exist. None of this is caricature territory.

The more accurate characterization of the gap is narrower. Frontier AI safety work has over-indexed on two related strategies (suppressing model outputs that look dangerous in training, and setting pre-deployment capability thresholds that gate whether a model ships at all) while under-developing the post-deployment control structures that would permit high-capability systems to be deployed safely into high-consequence beneficial uses. The two over-indexed strategies govern whether a dangerous capability exists. The post-deployment control structures govern what happens when a dangerous capability is fielded into the world under known constraints. The defender problem this essay addresses requires the latter, and the latter is where the investment has not gone.

That distinction matters because it identifies what needs to be built, not what needs to be torn down. The argument is not that frontier labs should stop doing capability evaluations or abandon pre-deployment review. The argument is that those activities are necessary but not sufficient, and that the missing layer (post-deployment structural control of fielded dangerous capability) is the layer the defender problem requires. The AI safety community has not built that layer yet. It needs to.

The intellectual foundation for that missing layer is not new. It is control theory. Nancy Leveson, at MIT, argued in a 2004 paper and an influential 2011 book that safety in software-intensive systems is not primarily a failure problem; it is a control problem. Software does not fail in the reliability sense. It most often contributes to accidents not by breaking but by commanding the system into an unsafe state. A flight control system that shuts off descent engines prematurely is not failing; it is doing exactly what it was instructed to do, in a situation where what it was instructed to do is wrong. Making the controller more reliable does not fix this. Only different controls (structural constraints at the levels above the controller) do.

Leveson's discipline (Systems-Theoretic Accident Model and Processes, known as STAMP, along with its applied method STPA) treats safety as what emerges when a hierarchical control structure enforces the right constraints on what its components are permitted to do. A controller at any level of the hierarchy can reason about anything; what the structure permits the controller to act on is what determines whether the system is safe. The method has been applied to space launch vehicles, commercial aviation, nuclear plant concept design, autonomous shipping, radiation oncology, and the security of software-intensive defense systems. STAMP does not solve model weight exfiltration, adversarial prompt injection, or telemetry poisoning; no single framework does. What STAMP provides is a paradigm for how to compose the individual solutions into a governance structure that can survive adversarial conditions. The AI-specific threat surface, which is real in ways STAMP does not address, gets handled by frameworks designed for that surface: MITRE's ATLAS taxonomy, its SAFE-AI control mappings, its CREF resilience engineering work. The integration of paradigm with AI-specific machinery is what produces a governance regime. The integration is what is missing.

For the defender problem this essay is concerned with, the paradigm-level failure is decisive. Capping the defender's reasoning capability, refusing to let the defender's AI think fluently about adversary tradecraft, attacker psychology, offensive tooling, living-off-the-land patterns, is the move that guarantees the defender's AI is structurally blind to what it needs to see. A pre-deployment capability threshold that treats "can reason about offensive tradecraft" as a gating failure condition is the threshold that guarantees the defender's best tool never gets built. This is not a failure of AI safety as a discipline. It is a failure of the specific strategy of output suppression and capability gating to produce the capability the defender problem requires.

A scanner finds bugs. A hunter finds ghosts.

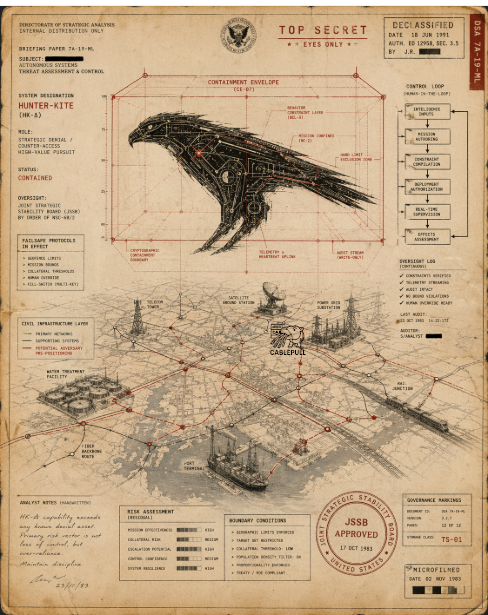

What would a defender capable of closing the gap actually need to do?

It is not vulnerability scanning. Vulnerability scanning finds unpatched weaknesses in software, waiting to be patched. The weaknesses are not the current problem. The implants are already in. Cataloguing outdated web application firewalls is a worthwhile task in principle and rearranging deck chairs in practice: the hull has been compromised for two years.

What the defender needs is a hunter. A hunter is something categorically different from a scanner. Its job is to sit inside thousands of networks simultaneously and notice what a defense staffed by humans cannot notice, because there are not enough humans, and the signal is not in any place a human would think to look. The administrator whose login is real and whose credential is valid, but whose behavior during the login is off by a quarter standard deviation from his pattern over the previous eighteen months. The firmware update that passes its signature validation and takes 180 milliseconds longer to apply than the last seventeen updates of the same size. The lateral movement that looks exactly like routine IT maintenance because the adversary wrote it to look exactly like routine IT maintenance, having first stolen the defender's runbooks.
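The firmware-timing signal above can be made concrete with a minimal sketch. It assumes a simple z-score against a baseline of prior same-size updates; the durations, the `timing_zscore` helper, and the 3.0 threshold are all hypothetical choices for illustration, not a description of any fielded detector, and a real hunter would weigh many such weak signals together rather than alert on one.

```python
from statistics import mean, stdev


def timing_zscore(baseline_ms, observed_ms):
    """Return how many baseline standard deviations observed_ms sits above the baseline mean."""
    if len(baseline_ms) < 2:
        raise ValueError("need at least two baseline samples")
    spread = stdev(baseline_ms)
    if spread == 0:
        raise ValueError("baseline has no variance; z-score is undefined")
    return (observed_ms - mean(baseline_ms)) / spread


# Hypothetical apply times (ms) for the last seventeen updates of the same size.
baseline = [2400, 2410, 2395, 2405, 2390, 2415, 2400, 2398, 2402,
            2407, 2393, 2411, 2399, 2404, 2396, 2408, 2401]

# The signature validates, but this update takes 180 ms longer than usual.
latest = mean(baseline) + 180

z = timing_zscore(baseline, latest)
flagged = z > 3.0  # illustrative threshold, an assumption
```

Against a baseline this tight, a 180-millisecond delay stands far outside normal variation even though every conventional check on the update passes. The quarter-standard-deviation login drift in the same paragraph is the harder case: no single-feature threshold like this one would catch it, which is why the essay asks for a reasoner rather than a rule.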

A hunter has to generate hypotheses no human analyst would generate, because no human has synthesized the complete corpus of adversary tradecraft (every threat intelligence report, every leaked playbook, every public and private profile of every advanced persistent threat group, every piece of open-source offensive tooling) into the kind of intuition a seasoned adversary operator has. A hunter has to reason adversarially. It has to think like the people who already broke in, at the scale those people operated at, inside the networks they are already inside. That is the capability requirement. It is also, precisely, the capability the current framework is designed to refuse.

That is the dangerous AI of the essay's title. It is worth being precise about what it is and what it is not, because "dangerous AI" can mean many things and the argument depends on distinguishing them. There is a capability ladder involved here, and the rungs have very different governance implications.

At the lowest rungs: a model that reasons about adversary tradecraft, generates hypotheses, writes detection logic, maps observed behavior to known attacker techniques, and produces human-readable incident narratives for analysts to review. At the middle rungs: a model with read access to live telemetry (network flow data, endpoint telemetry, identity logs, operational-technology historian data), permitted to query that telemetry and correlate across it. At the higher rungs: a model authorized to propose containment actions (isolate this host, disable this credential, rotate this key) for human review. At the highest rungs: a model authorized to take containment actions autonomously, or to operate inside production control planes.

This essay is arguing for the lower and middle rungs. It is explicitly not arguing for a model with autonomous irreversible action authority, especially not inside operational-technology environments where an incorrect containment action can cause the cascade the hunter is meant to prevent. The capability the argument requires is a hunter that can reason about what the adversary is doing and produce analysis and candidate action that a human authorizes. The higher rungs involve categories of risk the essay does not try to justify, and the governance regime proposed in the next section would not authorize them without dramatically more demonstrated assurance than currently exists.
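One way to see why the ladder matters for governance is to write it down as an ordered set of authorities with a deployment ceiling. The sketch below is illustrative only: the `Rung` names, the `is_permitted` check, and the choice of `READ_TELEMETRY` as the ceiling are assumptions made for this example (one reading of "the lower and middle rungs"), not a specification the essay provides.

```python
from enum import IntEnum


class Rung(IntEnum):
    """Hypothetical encoding of the capability ladder, lowest authority first."""
    REASON_AND_REPORT = 1       # hypotheses, detection logic, incident narratives
    READ_TELEMETRY = 2          # query and correlate live telemetry, read-only
    PROPOSE_CONTAINMENT = 3     # suggest actions for human review
    AUTONOMOUS_CONTAINMENT = 4  # act without a human, or inside control planes


def is_permitted(requested: Rung, ceiling: Rung) -> bool:
    """A request is allowed only if it sits at or below the deployment ceiling."""
    return requested <= ceiling


# Assumed ceiling for this sketch; a regime would set it per deployment.
DEPLOYMENT_CEILING = Rung.READ_TELEMETRY

permitted = [r.name for r in Rung if is_permitted(r, DEPLOYMENT_CEILING)]
```

The point of the ordering is that the ceiling is a property of the structure around the model, not of the model's reasoning. The model can think about anything; what it is permitted to do is decided one level up.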

What does this look like on Tuesday at two in the morning inside a regional water utility with a six-year-old firewall and no security operations center? It looks like this: the utility's telemetry (whatever they have, whatever quality) flows into a shared regional response center where the hunter operates, scoped by contract and by cryptographic binding to the utilities it covers. The utility itself runs no model. The utility runs its plant. An anomalous successful login from a vendor remote-access account arrives outside the vendor's normal maintenance window. The hunter, reading the utility's telemetry from inside the regional enclave, correlates it with a firmware update applied two days earlier, notes a timing anomaly in that update, flags a pattern consistent with a known adversary playbook, and pages a human analyst at that same regional center, which is staffed by a small team covering dozens of such utilities. The analyst reviews the finding, contacts the utility's on-call operator, and walks them through a containment step executed on the utility's own existing tooling. The hunter does not execute it. The utility does not host it. The regional center is where the capability lives; the coverage is what the utility receives. The outcome this argument is buying is the existence of that page at two in the morning, in an organization that today has no one watching and no tool that could find what was there.
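The two-in-the-morning scenario reduces to a small correlation step followed by a handoff to a human. The sketch below is a toy version under stated assumptions: the `Event` fields, the `COVERED` scope set, the three-day window, and the utility identifiers are all hypothetical, and the anomaly flags are taken as given rather than computed. Note what is absent: there is no function that executes containment. The only output is a finding marked for human review.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    utility_id: str
    kind: str        # "vendor_login" or "firmware_update" in this sketch
    at: datetime
    anomalous: bool  # assumed to be set by upstream detection


# Hypothetical contractual scope: telemetry from anyone else is ignored.
COVERED = frozenset({"water-utility-17"})


def correlate(events, window=timedelta(days=3)):
    """Pair each anomalous in-scope vendor login with anomalous firmware updates shortly before it."""
    findings = []
    for login in events:
        if login.kind != "vendor_login" or not login.anomalous:
            continue
        if login.utility_id not in COVERED:
            continue  # out of scope by construction
        related = [
            e for e in events
            if e.kind == "firmware_update" and e.anomalous
            and e.utility_id == login.utility_id
            and timedelta(0) <= login.at - e.at <= window
        ]
        if related:
            findings.append({
                "utility_id": login.utility_id,
                "login": login,
                "related": related,
                "status": "needs_human_review",  # the hunter pages; it does not act
            })
    return findings


events = [
    Event("water-utility-17", "firmware_update", datetime(2024, 1, 7, 14, 0), True),
    Event("water-utility-17", "vendor_login", datetime(2024, 1, 9, 2, 0), True),
    Event("coop-99", "firmware_update", datetime(2024, 1, 7, 14, 0), True),
    Event("coop-99", "vendor_login", datetime(2024, 1, 9, 2, 0), True),
]
findings = correlate(events)
```

In this example only the covered utility produces a finding, and that finding goes to the analyst at the regional center, who decides what, if anything, the utility's operator should do on the utility's own tooling.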

This is the dangerous AI. Not an autonomous weapon. Not a digital operator acting on its own judgment inside critical systems. A reasoning tool whose reasoning surface extends into territory the current framework has placed off-limits, governed into a structure where its analysis becomes action only through human authorization, and scoped to the defender's network by construction. It is dangerous in one specific sense: it knows how to think like the attacker. It has to.

A careful reader will notice a tension here that deserves to be stated directly. If the hunter in deployment is a read-heavy analytical tool with human-authorized action, then why does the essay later argue for a weight-level control regime that treats the model as strategically dangerous? The two pictures do not cancel each other. They describe two different properties of the same artifact.

What the hunter does in deployment is constrained by the governance regime: scoped telemetry access, human-authorized action, cryptographically-bounded network scope, no autonomous irreversible operations. That is the modest deployment picture, and the essay stands by it. What the hunter is at rest (the weights themselves) is a different object. Those weights would concentrate and operationalize cyber-offensive reasoning in a form no current commercial model offers: adversary tradecraft, attacker reasoning patterns, exploit development logic, operational planning, all trained and assembled into something coherent rather than scattered across a thousand blog posts and conference talks. The argument is not that frontier models know nothing of this material; they know plenty. The argument is that the material has not been deliberately concentrated, fluency-trained, and made operationally reliable in a single artifact, because current policy specifically disfavors producing such an artifact. A defender built by producing it would, released into open distribution, materially reduce the skill barrier for misuse. The governance regime has to hold because the weights compress capability that would otherwise require an experienced human operator to wield.

This is the distinction that reconciles the two pictures. The hunter is a modest analytical tool in deployment. The weights of the hunter are a strategic asset at rest. The governance regime has to protect both conditions simultaneously: scoping what the deployed tool does, and preventing what the model contains from leaving the regime. The two problems are related but not the same, and the controls that address each are not the same either.

Time, control, outcomes. Pick all three and you lose.

An essay arguing for a costly, risky, one-way capability has to answer the obvious question: at what cost, and to what end?

There is a shape to this that is worth pausing on, because once the shape is named, everything else in the argument follows. Three things are in tension, and they will not resolve in the defender's favor unless we are honest about which of them we actually control.

The first is time, and time is the thing the defender does not hold. American defense officials have publicly assessed that China could have the capability to act against Taiwan within a window that lands near 2027. The adversary decides when, or whether, pre-positioned networks activate. The AI supply chain is being compromised at a cadence the defender did not set and cannot slow. The clock is running on someone else's watch. Staring at it does not reset it.

The second is control: the governance regime around any capability we build. Control is the axis investment actually moves. Every dollar, every statute, every accredited assessor, every line of cryptographic attestation, every criminal liability provision, every export restriction, every operator vetting program either exists or does not. Control is what the United States can choose. It is also, bluntly, the axis the AI safety community has spent the last several years choosing badly.

The third is outcomes, and outcomes, plainly, are what the first two produce. They are what we end up with. An adversary who paralyzes water utilities on a Tuesday, or an adversary who looks at the problem of paralyzing water utilities and decides today is not the day. A defender who sees the ghost in the network, or a defender who stares at a dashboard of green checkmarks while the implants wake up sector by sector. The defender's purpose, in this conversation, is not victory in a war that starts in 2027. The defender's purpose is to make that war uncertain enough that it does not start.

These three things compete. You cannot have all of them at full measure. Perfect control plus the outcomes we need would require time we do not have. Pursuing fast outcomes without control is reckless in a way the 2027 reader will remember for the rest of their life. The current default (maximum control interpreted as maximum capability-capping, at the cost of any meaningful outcome) is itself a choice. It is the choice the United States is currently making. And the consequences of that choice are visible in every incident on the accompanying shelf.

There is a serious objection to the argument that deserves a direct answer, not a footnote. The AI tooling layer is, as an earlier section documented, currently under sustained compromise. If the defender's own tools are the adversary's reconnaissance vector, as the s1ngularity attack demonstrated at scale, then a hunter deployed at scale into ten thousand underdefended utilities may not be a defender. It may be the adversary's most efficient persistence mechanism yet: a pre-positioned instrument fielded by the defender, under the defender's own authority, already inside every network the adversary would otherwise have to compromise individually.

This objection is real and it is the hardest version of the argument to answer. The answer has two parts, and neither is reassuring. The first part is that the objection applies with equal force to every AI-based defensive tool the industry is currently fielding, not only to the hunter this essay is arguing for. Every endpoint-detection platform that now uses a language model, every security-operations copilot, every AI-assisted log analyzer that a managed security provider sells to the same small utilities, all of them face the same tooling-compromise exposure, and all of them are being deployed anyway. The hunter is not a special case of this risk. It is the general case made visible. Refusing to build the hunter does not solve the tooling-compromise problem. It only exempts the hunter from it while leaving every other AI-based defensive tool in the same exposed position.

The second part of the answer is that the hunter's governance regime, if built as this essay proposes, is specifically designed to address the tooling-compromise risk that the rest of the industry's AI tools currently do not address. The cryptographic attestation of every deployment, the continuous third-party assurance of the operating environment, the extension of mechanical enforcement down through the model-hosting and agent-runtime layers: these are the things the current AI-tool market does not have, and they are precisely the things that would make a hunter deployment more trustworthy than the AI-assisted detection platform a small utility is running right now. The hunter is not safer than the status quo in the abstract. The hunter is what the status quo would need to become in order to be worth deploying at all. This is an uncomfortable observation, but it is the observation the tooling-compromise objection forces.

The specific outcomes this argument is spending time and control to purchase are worth naming plainly.

First: coverage of the underdefended. The rural electric cooperative and the regional water utility should be inside the hunter's coverage envelope, not because they are sophisticated operators who will manage the deployment well, but because they are where the adversary already is. Coverage of the organizations sitting below the security poverty line is the outcome the Fortune 500-oriented security market has systematically failed to produce, and the governance regime that distributes the hunter has to solve the distribution problem the market has not.

The word "distributes" deserves a moment, because the deployment architecture this essay proposes is not what the reader is likely imagining. The hunter is not shipped as weights to ten thousand underdefended utilities, each running a local instance with local credentials and local logs, each a potential leakage vector, each a small lab's worth of operational-security exposure. That architecture would be unworkable on its face; the governance regime could not hold. What the regime distributes is coverage, not the model. The weights live in a small number of tightly-controlled enclaves: cleared facilities, regulated cloud environments, regional response centers operated under the public-private evaluation regime described later in this essay. Telemetry flows inward from the covered operators. Findings flow outward. The rural cooperative does not receive a copy of the hunter; the rural cooperative is covered by analysts who receive the hunter's findings about the cooperative's own network. The water utility does not operate the tool; a regional response center does, on the utility's behalf, under clearly-scoped authority. The deployment looks less like endpoint software and more like the way managed detection and response already works for enterprises that can afford it, scaled down in cost and up in governance, extended to the organizations that currently have nothing. That is how the argument for coverage of weak operators does not collapse into an argument for the democratization of a dangerous model. The model is not democratized. The coverage is.

Second: collapse of the adversary's dwell-time advantage. The pre-positioned implants rely on long, quiet presence between the moment of compromise and the moment of activation. The hunter's purpose is to compress that window, to transform the adversary's strategic dwell time, measured in months of unseen presence followed by synchronized activation, into tactical dwell time, measured in hours of detected presence followed by scoped and contained response. The hunter does not prevent the adversary from getting in. The hunter changes what happens between the getting-in and the damage.

Third: preservation of strategic options. American deterrence in the Pacific is not a narrow military question. It is a composite of satellite infrastructure, ground stations, launch cadence, the power generation that keeps those ground stations running, and the telecommunications carriage that moves the data those satellites produce. Every layer of that stack depends on civilian networks that sit under the threat the hunter is meant to address. If the civilian networks fall, the satellites fall with them, in the sense that the satellites become operationally useless regardless of whether any hostile action has been taken against the satellites themselves. The hunter's strategic purpose is to preserve the ability to project power through space-based assets by defending the terrestrial systems on which those assets depend. This is the reframe that moves the essay from a cybersecurity argument to a strategic one.

Fourth: deterrence itself. Not victory in the coming conflict. A shift in the adversary's calculus about whether initiating the conflict produces the outcome they expect. An adversary whose theory of success depends on paralyzing civilian networks on a strategic timetable looks differently at initiation when that paralysis cannot be assumed to succeed. The hunter's purpose is to introduce uncertainty into that calculation. Whether the uncertainty is enough to change the adversary's decision is not something this essay can prove; adversary decision-making under strategic pressure is precisely the kind of thing that cannot be modeled to the satisfaction of a skeptical reader. What can be said is that the current posture does produce some uncertainty: Volt Typhoon was detected and publicly exposed, federal agencies have disrupted associated infrastructure, advisories have been issued and guidance published. Still, the uncertainty it produces is insufficient across the underdefended layer where pre-positioning is most likely to matter. The adversary's pre-positioning can still be assumed to succeed at scale precisely because the small utility and the regional cooperative sit below the detection threshold the current posture actually reaches. Moving that threshold downward is what the hunter is for. Whether the resulting shift is enough to change adversary behavior is an honest open question. What is not an open question is that the current floor is too high.

None of these outcomes are available under the current framework. All of them require the dangerous AI this essay is arguing for. All of them require the governance regime the essay argues for next.

Creativity stochastic. Consequence bounded.

The claim of this section is that the governance regime that makes the hunter survivable is not new. It is an integration of existing disciplines, most of which the AI safety community has not yet adopted. Nothing in the proposal is invented. The novelty is the recognition that disciplines designed for rockets, nuclear plants, lawful intercept, and radiation oncology already solved the problem that AI governance is currently treating as unprecedented.

The foundation is control theory applied to the AI system, in Leveson's sense. The hunter is one controller among several: the human operator is above it, the operating organization is above the operator, and regulatory authority is above the organization. Each level enforces constraints on the level below. The AI-specific threat surface (weight exfiltration, training data poisoning, inference-layer compromise, prompt injection) is handled by existing MITRE frameworks designed for exactly this purpose. Resilience under sustained pressure draws on engineering disciplines that long predate the current AI moment. The integration produces six structural primitives described in the accompanying sidebar. The primitives are implemented, not chosen. Each one is a property of the runtime the hunter operates within, of the cryptographic attestation around its deployment, of the auditable lineage of its actions, of the legal regime under which it operates. None of them depend on the hunter's own values or on the hunter being trained to refuse. The argument of control theory, and the argument of this essay, is that structural controls work where behavioral training does not.
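
The layered-controller idea can be made concrete with a small sketch. What follows is an illustration under stated assumptions, not the regime's design: the level names follow the paragraph above, but the `ControlLevel` type, the `first_objection` function, the action fields, and every constraint in `HIERARCHY` are hypothetical, invented here only to show the shape of a structural check. The point it illustrates is that the decision to permit an action is made by code outside the model, one level at a time, and never by asking the model whether it would like to comply.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# An action is a plain description of what the hunter wants to do.
# The field names ("kind", "network", "approved_by") are hypothetical.
Action = Dict[str, str]


@dataclass(frozen=True)
class ControlLevel:
    """One level of the control hierarchy and the constraint it enforces."""
    name: str
    permits: Callable[[Action], bool]


def first_objection(levels: List[ControlLevel], action: Action) -> Optional[str]:
    """Check the action against each level, highest authority first.

    Returns the name of the first level that forbids the action,
    or None if every level permits it.
    """
    for level in levels:
        if not level.permits(action):
            return level.name
    return None


# Hypothetical constraints, ordered from highest authority to lowest.
HIERARCHY = [
    # The regulator fixes which kinds of action exist at all.
    ControlLevel("regulator", lambda a: a.get("kind") in {"observe", "report", "isolate"}),
    # The operating organization fixes which networks are in scope.
    ControlLevel("organization", lambda a: a.get("network") in {"utility-a", "coop-b"}),
    # The human operator must approve anything that changes a network.
    ControlLevel("operator", lambda a: a.get("kind") != "isolate" or a.get("approved_by") is not None),
]

if __name__ == "__main__":
    print(first_objection(HIERARCHY, {"kind": "observe", "network": "utility-a"}))
    print(first_objection(HIERARCHY, {"kind": "isolate", "network": "coop-b"}))
```

In this sketch nothing depends on the hunter's disposition. A refused action is refused because a level above it said no, and the name of that level is what gets recorded.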

The governance layer is what distinguishes this from the "just build it and see" posture the essay is sometimes mistaken for. The proposal is explicit: a combined public and private sector evaluation regime, in three layers.

The first layer is public-sector pre-deployment evaluation. A capable government authority (in the United States, the Center for AI Standards and Innovation is the current candidate, with its equivalent partners in allied nations) conducts rigorous pre-deployment evaluation of the hunter's capabilities, behavioral envelope, and failure modes. The output is a public finding: what the hunter does, under what conditions, and what constraints its deployment must satisfy. This is Leveson's method applied at the policy layer. The function must exist and be properly resourced regardless of which administration owns it.

The second layer is private-sector deployment assurance. The six primitives are implemented by qualified builders and operators, with continuous attestation performed by accredited third parties (the defensive-AI analog of the NIST-accredited lab regime, or the FedRAMP third-party assessment organization structure). This is an existing model applied to a new domain, not an invented one. The emphasis on continuous attestation rather than annual audit matters: the deployed hunter is a living artifact under ongoing assessment, not a certified snapshot.

The third layer is hybrid enforcement. Federal statutory authority handles what only a government can handle: export controls on the weights themselves, criminal liability for misuse, personnel vetting for operators of the highest-sensitivity deployments. A private-sector consortium, modeled on the Institute of Nuclear Power Operations, handles operational norms, incident sharing, and coordination across defenders. Nuclear power is a useful analog here not because the hunter is a nuclear weapon but because nuclear power is the clearest domestic precedent for governing a civilian-operated, privately deployed, dual-use technology with catastrophic failure modes, through a combination of strict federal authority and operational consortium norms. The analogy has real limits. Nuclear power has a small number of operators running heavily engineered physical plants under mature physical regulatory inspection, while the defender regime envisioned here involves thousands of heterogeneous operators, opaque software dependencies, and exploit cycles measured in weeks. The nuclear model offers a pattern, not a template. It gives the governance conversation somewhere to start that is not a blank page, and that is worth more than its limitations subtract.

There is something worth saying plainly about those export controls on weights, because they are not hypothetical and the problem they address is not abstract. American artificial intelligence laboratories have already had researchers caught exfiltrating proprietary model materials to foreign entities, and the prosecutions are recent. In January 2026 a federal jury in San Francisco convicted Linwei Ding, a Google software engineer who had spent four years inside the company's supercomputing data centers, on seven counts of economic espionage and seven counts of theft of trade secrets. The FBI called it the first AI-related economic espionage conviction in American history. Over the course of a year, Ding had copied more than two thousand pages of Google's proprietary AI infrastructure documentation (the architecture of Google's TPU and GPU systems, the software that orchestrates thousands of chips into a supercomputer, the platforms that train and serve the largest models) into Apple Notes on his company laptop, converted them to PDFs, and uploaded them to his personal cloud account. He did this while quietly taking the CEO role at a Chinese startup he was pitching to Beijing investors as a replica of Google's supercomputing platform. It took Google eighteen months to notice. They noticed because he slipped up, not because their security did its job.

The Ding conviction is one case. It is not the only one, and it will not be the last. The pattern (state-linked actors placing researchers inside frontier American laboratories, waiting, copying, exfiltrating) is well enough documented at this point that the general counsel of every major lab has a drawer with something like it in it. The question is not whether weight theft happens. The question is whether the country treats it as the serious federal offense it is, or whether we keep pretending the only way a capability leaves a laboratory is through a deliberate export decision the laboratory itself makes.

And there is a harder problem sitting underneath the insider threat, which is that once a sophisticated model is released into the open, even legitimately, even under a permissive license, it is not coming back. In January 2025 a Chinese laboratory called DeepSeek released an open-weight reasoning model, R1, under the MIT license, with distillation explicitly permitted. Within weeks, an open community at Hugging Face had produced a full reproduction of the distillation pipeline. Within months, R1 and its descendants were running locally on consumer hardware for anyone who wanted them. Whatever the model knew, whatever reasoning capability it had, was now, in a durable and irreversible sense, everyone's. There is no recall. There is no retraction. There is no jurisdiction in which you can meaningfully ask for the weights back. The cat is out, the bag is empty, and the people who might want the cat have the cat.

Sit with that for a moment. The hunter this essay is arguing for is, by construction, more dangerous than DeepSeek R1. Its whole reason for existing is that it can reason fluently about offensive tradecraft at a level the current AI safety framework has deliberately refused. If those weights ever leave the regime (through an insider, through a breach, through the thousand small accidents that happen at scale), there is no calling them back. The governance regime described in this essay is doing two jobs, and the second one is harder than the first. The first is preventing deliberate export. The second is preventing leakage while the capability still matters.

This is where the history of cryptographic export controls earns its place in the argument, though the analogy carries known disanalogies that should be named rather than hidden. In the 1990s the United States classified strong cryptographic software as a munition under the International Traffic in Arms Regulations. It was a federal crime to export RSA implementations above certain key sizes. For roughly a decade the regime was strategically important, and during that decade it was enforced with something like seriousness. Then, in the early 2000s, the regime was relaxed, not because it had always been wrong, but because the capability had been democratized globally, the strategic calculus had shifted, and the controls were producing more harm to American industry than benefit to American security. Critics of that regime, correctly, point out that it was leaky during its operation, economically costly to American software firms, and ultimately overtaken by global diffusion. All of that is true. What the regime nevertheless demonstrates is that the United States has, within living memory, run a severe export-control program on dual-use software, sustained it through a specific strategic period, and then relaxed it in an orderly way when the strategic logic expired. The regime had a lifecycle. The lifecycle is the lesson.

The analogy should also be explicit about what is not being imported from that history. Not key-size stupidity: no threshold on model parameter counts or training compute chosen to feel restrictive without mapping to actual capability. Not broad criminalization of defensive research: security researchers studying adversary tradecraft should not be the target population, and any regime that produces a chilling effect on defensive work has been mis-designed. Not permanent suppression: the sunset criteria are part of the design. Not unilateral American disadvantage: the regime must be structured so that American defenders do not end up more restricted than the adversaries they are defending against.

There is one more distinction the analogy forces, and it is the one that most determines whether the regime can work at all. Export controls can restrict weights, restrict compute, restrict services, restrict technical assistance. They cannot restrict knowledge: the conceptual understanding of adversary tradecraft, the intuition of a skilled operator, the published literature of threat intelligence. Once the methods are understood, the methods are understood. A thoughtful reader will ask, reasonably enough, why controlling weights matters at all if knowledge diffuses regardless. The answer is the whole reason weights are the control point in the first place. Weights compress knowledge into operational capability. They take what would otherwise require a senior red-team operator, years of tradecraft, and hundreds of hours of planning, and they deliver it as an inference call. They lower the skill floor. They collapse time-to-effect. They make high-skill offensive reasoning available to lower-skill actors in volumes the expert labor market cannot match. The regime does not propose to prevent the eventual reproduction of the capability. It proposes to preserve, for a defined period, an asymmetry in which American defenders can reach that capability while a broader class of potential misusers cannot. Buying time is what this kind of control does. Buying time is exactly the thing that matters during a strategic window.

That is the shape the proposal here takes. Weight-level export controls, criminal liability for exfiltration, personnel vetting for operators of the most sensitive deployments, interdiction authority against known theft operations: all of it, as severe as the situation demands, lasting only while the strategic window is the governing constraint. The regime is not proposed as permanent. It is proposed as time-bounded, tied to a strategic window whose closing conditions are visible and whose expiration is part of the design. Cryptography policy learned how to do this. The AI policy conversation has not yet tried.

"2027 window closes" is not by itself enough to justify sunset, because the window closing is a temporal marker rather than a condition. The regime needs explicit termination criteria: the things that, if true, make the regime no longer necessary or no longer proportionate. A defensible list includes: comparable defensive capability has diffused widely enough that restriction no longer confers asymmetric advantage; critical-infrastructure telemetry coverage has reached a defined threshold such that the hunter no longer represents the marginal difference between detection and blindness; the adversary's pre-positioning doctrine has either succeeded and been contained or shifted to vectors the hunter does not address; the regime has produced documented leakage or misuse sufficient to make continuation worse than termination; a statutory sunset date has been reached and reauthorization either occurs on evidence or does not occur at all. These are the conditions under which the regime should end. Naming them is part of the regime, not an afterthought to it. A control regime that does not know how it ends is a control regime that does not end.
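
One way to see what "naming them is part of the regime" means in practice is to write the criteria down as explicit conditions that can be checked. The sketch below is a minimal illustration, assuming each criterion can be reduced to a reviewable fact; the `RegimeStatus` fields, the coverage numbers, and the `termination_reasons` function are hypothetical names introduced here, and the handling of the statutory date reflects one reading of the last criterion (the regime lapses at the sunset date unless it has been reauthorized on evidence).

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class RegimeStatus:
    """Reviewable facts about the regime. All field names are hypothetical."""
    capability_widely_diffused: bool      # restriction no longer confers asymmetry
    telemetry_coverage: float             # fraction of covered infrastructure, 0.0 to 1.0
    coverage_threshold: float             # the defined threshold for that fraction
    doctrine_contained_or_shifted: bool   # adversary doctrine no longer fits the hunter
    harm_outweighs_benefit: bool          # documented leakage or misuse
    statutory_sunset: date                # date written into the statute
    reauthorized_on_evidence: bool        # explicit reauthorization has occurred


def termination_reasons(status: RegimeStatus, today: date) -> List[str]:
    """Return every termination criterion that currently holds.

    An empty list means no named criterion has been met.
    """
    reasons = []
    if status.capability_widely_diffused:
        reasons.append("capability diffused")
    if status.telemetry_coverage >= status.coverage_threshold:
        reasons.append("coverage threshold reached")
    if status.doctrine_contained_or_shifted:
        reasons.append("doctrine contained or shifted")
    if status.harm_outweighs_benefit:
        reasons.append("leakage or misuse")
    if today >= status.statutory_sunset and not status.reauthorized_on_evidence:
        reasons.append("sunset reached without reauthorization")
    return reasons
```

The calendar appears only in the last condition, and even there it is paired with a decision about reauthorization. That matches the argument above: a date on its own is a marker, while the other entries are conditions.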

This hybrid approach answers the question that the current consortium-gated model does not: who holds the leash, and by what authority? The answer: the public sector certifies the capability, the private sector implements the controls, the authority is statutory, and accountability is distributed. An arrangement in which twelve organizations are selected by a single laboratory is replaced by a regime in which any qualified operator can field the capability, under certification that is public, renewable, revocable, and, when the window closes, relaxed.

Honest arguments name what they cost.

The hunter is a one-way ratchet. Once built and distributed, the capability changes the threat surface for everyone, including the builders. There is no undo button. The argument of this essay is a bet that governed distribution before the window closes beats ungoverned adversary advantage after it closes. That bet could be wrong. It is worth naming.

Distribution at scale is an unsolved problem even when the architecture is right. Concentrating the hunter in a small number of regional enclaves is the architectural answer to the weights-leakage exposure of ten thousand independent deployments, but concentration creates its own risks: a single compromised enclave is a worse incident than any single compromised utility, and the operators of those enclaves become the highest-priority insider-threat targets the American AI supply chain has yet produced. The governance regime has to do real work there, not symbolic work. Personnel vetting, cleared-facility protocols, continuous attestation of the enclave's operating environment, compartmentalized access within the enclave, independent red-team testing of the enclave rather than merely the model: all of it, at the level of seriousness the consequences require. Concentration reduces the number of leak vectors. It raises the consequence of each remaining vector. The framework has to acknowledge both halves of that trade and build against the larger one.

The tooling itself is under active compromise. The incidents catalogued in the accompanying sidebars are not theoretical. Building a trustworthy hunter requires trustworthy model hosting, trustworthy package registries, trustworthy orchestration, trustworthy agent protocols. None of those currently exist at the level the hunter would require. The architectural vulnerability disclosed in the Model Context Protocol's official SDKs in April 2026 affected one hundred and fifty million downloads of software sitting in precisely the layer the hunter would run on. Building the hunter responsibly requires rebuilding the tooling underneath it first (the model distribution layers, the dependency managers, the agent protocols), which means the governance regime must extend down the stack further than the AI safety conversation has so far been willing to go.

"Trustworthy" is not a word that can stand alone here. What the regime would actually require, as minimum categories, includes: cryptographically signed model artifacts with verified provenance; reproducible builds of the inference stack; strict dependency pinning with attestation at every layer of the supply chain; isolated tool execution with no arbitrary code paths available to the runtime; hardware-backed attestation of the deployment environment; secrets held outside the model's context entirely; immutable audit logs written to infrastructure the model cannot reach; outbound network restrictions preventing the inference runtime from initiating connections to the open internet; a kill switch independent of the model's own cooperation; and independent red-team certification renewed at defined intervals. This list is not a specification. It is the category of requirements any deployment would need to satisfy before the regime's governance primitives are even meaningful. The current AI tooling market is delivering essentially none of these to its customers today. The gap is not just a matter of maturing existing products. It is a rebuild.
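
Two of those categories are simple enough to sketch, if only to show how small the core mechanism is compared with the infrastructure around it. The Python below is an illustration under stated assumptions, not a specification: `artifact_matches_pin`, `append_entry`, and `verify_log` are hypothetical names, the artifact check compares a SHA-256 digest against a pinned value and is not a substitute for a real signature scheme, and the hash-chained log gives tamper evidence only. Immutability in the sense meant above would still require storing the log on infrastructure the model cannot reach.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # stand-in predecessor hash for the first log entry


def artifact_matches_pin(artifact: bytes, pinned_sha256: str) -> bool:
    """Return True only if the artifact's SHA-256 digest equals the pinned hex digest."""
    digest = hashlib.sha256(artifact).hexdigest()
    return hmac.compare_digest(digest, pinned_sha256)


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _entry_hash(prev_hash, event)})


def verify_log(log: list) -> bool:
    """Recompute the chain from the start; any edited or removed entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, {"action": "scan", "target": "utility-a"})
    append_entry(log, {"action": "report", "target": "utility-a"})
    print(verify_log(log))  # the untouched chain verifies
    log[0]["event"]["action"] = "delete"
    print(verify_log(log))  # an edited entry no longer verifies
```

The mechanism fits in a few dozen lines. What the current tooling market lacks is everything that makes those lines mean something: a trusted source for the pinned digest, a verifier the runtime cannot modify, and a place to keep the log where an attacker who controls the model cannot also rewrite the chain.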

And the claim on which everything rests (that the hunter can be meaningfully controlled under the proposed regime) has to be argued, not asserted. The essay argues it by invoking prior art: systems-theoretic safety engineering, nuclear command and control, lawful intercept, the export control regime that governs cryptography and munitions. A reader should leave this essay able to question the argument, not simply convinced by it. A reader who accepts the argument without scrutinizing the control claim has not been persuaded. They have been flattered.

The case for a dangerous AI is the case for a defender we do not currently have, against a threat that is already inside our networks, on a timeline we do not control.

The paradigm reframe is narrower than the theatrical version. Safety is not only the absence of dangerous capability; safety also includes the presence of bounded consequence around dangerous capability. The current AI safety framework has made real progress on the first half of that statement and has not yet built enough of the second half to meet the defender problem at the scale and in the places it matters. That is the specific, repairable gap the essay is about. Not an inverted framework, not a wrong discipline, but a missing layer, on top of work that is otherwise sound.

The alternative is not safer. The alternative is choosing, by inaction, a particular strategic outcome: the one in which our own critical infrastructure activates against us while the best defender we could have built (the governed hunter we did not build) does not exist to see it.

The clock does not care which paradigm we were using when it ran out. The hunter's purpose is not to win the coming conflict. The hunter's purpose is to introduce, into the adversary's calculus, enough uncertainty about whether infrastructure paralysis actually succeeds that infrastructure paralysis stops looking like the reliable path to a quick strategic victory. That is a more modest claim than winning. It may also be the claim that matters.